INTERVIEW 2019

with DNEG’s Frederik Lillelund

by Jessica Fernandes, Spark CG Society

August 15, 2019

When Nebulae and Aliens Collide

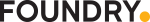

Men in Black: International, the latest installment of the franchise, takes us on a global journey of mystery, redemption, FX and aliens. In Part 1 of this series, I had the pleasure of chatting with Frederik Lillelund, CG Supervisor at DNEG Vancouver, about his studio’s work on the project.

DNEG was the lead VFX vendor on the project, working on nearly 600 shots across multiple internal sites (led by the Vancouver team, and complemented by the Montreal, Mumbai, and London locations). Coming onto the project quite late in the game, they faced an interesting challenge right out of the gate: all the sequences they were involved with had already been shot. As Frederik pointed out, “a lot of the looks had to be developed more or less on the fly, while shots were in progress, so we didn’t have the luxury of being able to start development while the filmmakers were still on set. We had to basically just keep up with the editorial changes that were coming in left and right.”

Watching the final film, it’s evident that they rose to the occasion, creating spectacular effects, including my favourite: the twins’ energy state.

The twins effect was gorgeous. Can you elaborate on how that was achieved?

The original brief was “creatures that were made out of some sort of energy”. When you saw them in what was called their “true form”, that was their natural form. They have these powers where they can transform and manipulate matter however they want. Throughout the movie they transform themselves to mimic the appearance of humans, taking on the form of the twins. They basically develop a skin outside of their true form so they can blend in with their surroundings.

The idea was that at some point they would be shot at and we would need to break through the skin, and reveal their true form underneath, for a beat, before they pulled themselves back together. When they use their powers in human form, their eyes also flare up, showing a hint of their natural state.

It was initially a loose brief, with terms like “magical effect” and “true energy”. To get to our final look, we started out in concept, tried different paths, and initially began with something that was more electric, then more fluid based. But then we came up with this idea that once their outer shell gets pulled back, it would reveal an underlying star nebula feel. That seemed to go down well with the clients. Once we started doing tests with that, it became apparent that in order for the audience not to get lost in what was going on in the nebula, we needed to give them an internal structure/nervous system, more akin to human structure. That way, when they appear, you would know which way they were facing, etc.

What were you using as reference?

We went online and found all these amazing images to study from, like the Hubble Space Telescope imagery, where you see distant galaxies and stars. We tried to see if we could recreate some of that in FX. The best way we found to do so was using tons and tons of particles. We took those particles and mapped an entire sheet of attributes to them, getting data for how dense the clusters were, the distance between them and the closest star, etc. This gave us data that we could re-map onto the visible colour spectrum, which allowed us to easily grade and adjust based on client notes (in terms of how colourful and nebula-like they wanted it to feel). Initially we had the nebulae more in the blue and green tones (that you traditionally see in pictures like these), but the clients wanted to shift the hue more into reds, to give more of a sinister feel. Our process allowed us to pretty easily adjust things. On top of this, there were some areas where the light was occluded, which created very contrasty areas and deep colours that the client really liked. It was a huge collaboration between FX and comp, who took the passes and developed the final look for it. There were definitely iterations to get there, but in the end it turned out really well.
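To make the attribute-to-colour idea concrete, here is a minimal sketch in Python. It is not DNEG's tooling: the function names, the neighbour-count density measure, the brightness falloff, and the hue values are all assumptions chosen for illustration. What it tries to show is the separation Frederik describes, where per-particle data is computed once and a regrade only changes how that data maps to colour.

```python
import colorsys
import math


def compute_attributes(points, stars, radius=1.0):
    """Return one (density, star_distance) pair per particle, each normalised to 0..1."""
    # Density (assumed measure): how many other particles sit within `radius`.
    density = [
        sum(1 for j, q in enumerate(points) if j != i and math.dist(p, q) <= radius)
        for i, p in enumerate(points)
    ]
    # Distance from each particle to its closest "star" point.
    star_distance = [min(math.dist(p, s) for s in stars) for p in points]
    return list(zip(_normalise(density), _normalise(star_distance)))


def _normalise(values):
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def grade(attributes, hue_sparse, hue_dense):
    """Remap cached attributes to RGB: hue follows density, brightness follows star distance."""
    colours = []
    for density, star_distance in attributes:
        hue = hue_sparse + (hue_dense - hue_sparse) * density
        value = 1.0 - 0.8 * star_distance  # particles near a star render brighter
        colours.append(colorsys.hsv_to_rgb(hue % 1.0, 1.0, value))
    return colours


# Hypothetical toy data: three particles and a single star.
points = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0), (5.0, 0.0, 0.0)]
stars = [(0.0, 0.0, 0.0)]
attributes = compute_attributes(points, stars)  # the expensive step, done once
cool_grade = grade(attributes, hue_sparse=0.66, hue_dense=0.33)  # blues and greens
warm_grade = grade(attributes, hue_sparse=0.0, hue_dense=0.08)  # shifted into reds
```

In this sketch, moving from the blue-green look to the red one is just a second call to `grade` with different hue endpoints; the particle attributes are untouched.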

Any special processes employed?

Because it was pretty heavy stuff in terms of the massive particle sims, we needed an easy way to visually see how this would turn out before we started running our sims. So we created tools that used simple geometric shapes to visually show how the nebula was going to look. We used that to get the layout locked down and sort out the timing of when the twins would be hit.

A common theme throughout the whole process — we had a lot of work to do, in what seemed like a very short amount of time. We knew on all fronts that we had to be very efficient on how we treated stuff. Apart from laying out the nebula, we needed it to interact with the twins. To do so, we body-tracked the actors, and used that as a collision object (so it would interact where needed). And we used wherever they’d be hit by the lasers as impact points on the body-track (places where they would suck back in when they regenerated).

When you have assets that feature in multiple sequences, it’s very common to have multiple vendors working on the same item — as you each have a different take on things. When someone gets a look that resonates with the client, it gets shared across and then everyone has to match it. We were really fortunate that we basically got to develop that nebula look that you see.

And it was a collaborative process on the eye effect. The client would show us what Sony had done on some of their shots, and we would develop it further from that. It was a bit of a collaboration back-and-forth, through the client, which was pretty fun.

How many different alien species were created for this project?

We had 5 hero creatures (if you count the twins as one), 8-10 background characters, and 3 characters that got omitted (Bob, Fat Gary and Hank the mole), plus we did augmentation on a few of the on-set extras dressed in prosthetics.

The three main characters that we knew we’d have to do facial animation for were Guy, Narlene, and Vungus. Apart from that, we also did a lot of concepts for background aliens, most of them designed to populate the London HQ set. We had alien MIB agents, flying gaseous creatures that were full of eyes poking out, etc. There were a lot of very creative and crazy ideas; some of them went through, and some of them (that were maybe too cartoony) were discarded, as the clients wanted to stay somewhat grounded in reality.

And we didn’t just create 2D concepts; we also crafted actual sculpts that we could present as 3D turntables. As expected, they went through a selection process: the client picked out a few that they liked, and we developed these further (created variations, enhanced them, etc.), then we finished building them, took them through lookdev and did animation tests. Unfortunately, we did have a few that made it all the way through into shots but were cut (due to editorial or creative reasons, or because once we got them in the shots, they didn’t fit in as well as we thought they would).

The clients also had us do enhancements to on-set prosthetics. They had a lot of extras dressed in all kinds of crazy costumes and prosthetic masks and some of them didn’t hold up in very close-up shots. Some just required touch-ups, while 1-2 of them required full 3D head replacement.

In terms of the alien species, which was the most challenging to create?

That was definitely our hero asset, Vungus the Ugly. Our other hero assets, Narlene and Guy, were able to speak, but only required head (for Narlene) or upper body and head (for Guy) replacements. Vungus was a full-on 3D character, who had to interact with all the other characters. He needed cloth, costume, groom, facial, etc.

Getting approval on the final design for Vungus was quite a long process, which ended up limiting us in terms of time to actually work on the asset. He went through quite a few design changes to get the right balance of him being ugly, but not too ugly, alien, but not too alien; sometimes he needed to be more human, but again, not too human. When we originally started, we were given concepts from another vendor who had started on it and we were asked to expand upon that. We created various concept models of him, explored personalities, different hairstyles, props, clothing, facial expressions, proportion changes, you name it.

But in the end it actually went full circle and we came back to the original concept art that they had chosen. Vungus was still very much a character in development though, so we worked from the approved concept, exploring the character and making some changes to enable it to function with the range of motion required. We had a version with hair, and one without, before the call was made to have him bald. And his eyes (colour, iris proportion) changed throughout the process too, as the filmmakers wanted him to mimic more of what the actors looked like. Even his hands were a development process. There were some hero close-up shots where they would feature, so we did a few variants (e.g. changing number of fingers, finger layout, colours and finger nail variants).

Getting final approval required us to present the asset more or less through the entire pipeline. The client basically wanted him posed, in a shot, lit, with facial expressions, groom, costume, prepped plates, etc. Right up until we actually showed the first shots where his performance was fully rendered, we weren’t completely sure whether they would like it and if it was 100% locked down. Thankfully, we have an amazing build team. They were able to keep up with the endless adjustments and we ended up creating something pretty cool!

How many design iterations were there for him?

I don’t remember the exact number, but there were definitely quite a few. And they were taken quite far before we were told to go back again. The fact that he’s a blue alien and the environment that we first present him in is blue made things challenging as well. We had to show the client that we could come up with creative ways of rendering him, so that he wouldn’t get completely lost in the background.

How about facial animation?

As soon as his look was approved, we had to get in there and create all the face shapes that we needed for the face rig. I think we created upwards of 320 unique blendshapes. We then took those and split them into different sections of his face, giving the animators even more control to achieve what they wanted to do. In the end I think he had 1,000+ different shapes that we could play with.
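For readers curious how one sculpted shape becomes several, the splitting step can be sketched roughly as below. This is an illustrative Python example, not DNEG's rig: the mask-based approach, the two regions, the function names, and the four-vertex "face" are all hypothetical, and the interview does not say how many regions were actually used. The idea is that each full-face shape is multiplied by a per-vertex weight mask for each region, so every sculpt yields one independently controllable shape per region.

```python
def split_by_region(deltas, region_masks):
    """Split one full-face shape into one shape per region.

    deltas: per-vertex (dx, dy, dz) offsets from the neutral face.
    region_masks: region name -> per-vertex weights. Weights across regions
    sum to 1.0 at every vertex, so the pieces add back up to the original.
    """
    pieces = {}
    for region, weights in region_masks.items():
        if len(weights) != len(deltas):
            raise ValueError(f"mask '{region}' does not match the vertex count")
        pieces[region] = [
            (dx * w, dy * w, dz * w) for (dx, dy, dz), w in zip(deltas, weights)
        ]
    return pieces


def apply_shapes(neutral, pieces, activations):
    """Add each regional piece to the neutral mesh, scaled by its slider value."""
    result = [list(v) for v in neutral]
    for region, amount in activations.items():
        for vertex, delta in zip(result, pieces[region]):
            for axis in range(3):
                vertex[axis] += amount * delta[axis]
    return [tuple(v) for v in result]


# Hypothetical four-vertex "face" with a full-face smile shape.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
smile = [(0.0, 2.0, 0.0), (0.0, 1.0, 0.0), (0.0, 1.0, 0.0), (0.0, 2.0, 0.0)]
masks = {
    "left": [1.0, 0.5, 0.5, 0.0],  # soft falloff across the middle
    "right": [0.0, 0.5, 0.5, 1.0],
}
pieces = split_by_region(smile, masks)
half_smile = apply_shapes(neutral, pieces, {"left": 1.0, "right": 0.0})
full_smile = apply_shapes(neutral, pieces, {"left": 1.0, "right": 1.0})
```

With both sliders at full, the pieces reproduce the original sculpt; driving only one gives a one-sided expression that was never sculpted on its own, which is the kind of extra control described above.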

The whole process was done in a very tight timeframe. This meant we had to get into a workflow where we were working on shots and animation was in progress, while the build team was still creating some of the face shapes needed for specific shots. As soon as new face shapes were complete, the facial rig had to be updated. And repeat. We needed to race as quickly as we possibly could before we had to put our pens down. It was a bit hectic at times and required strong communication between departments.

Which sequence or shot was the trickiest?

In general, the ones that Vungus features in, both inside and outside of the nightclub. Especially the sequence outside the nightclub that has Vungus in it, big effects work (the twins), crash interaction with the car and the set, MIB weapons, and the twins’ powers (sending a road wave down the street, crashing everything in its path).

Another big challenge for that sequence was the environment itself. The sequence was shot in two locations: on-site in London, at Ludgate Hill, and on set. Whenever they required stunts or effects that couldn’t be done on the streets of London, they had a green screen set. That’s where they tried to replicate the city street and the nearby shops around the area where all the action is happening. We got data from both locations, but there were some noticeable differences between them. Scans sometimes didn’t match exactly with the takes, items were moved around, and we needed to create a lot of props for interactions with cars. It was quite a job merging all that together and making it look like it was seamless in one street. At some point we even had our London department go out and capture additional footage, just to make sure we were prepared to deal with all the different situations that might arise when we’d finally see the cut. But in the end it turned out great — our environment team did an amazing job!

Were there any new tools or techniques created or employed for this show?

Due to the time constraints and heavy performance animation needed for Vungus, we were focused on optimizations in terms of pipeline and workflow efficiency between departments, especially for creatures. At the same time, the team were really passionate about pushing the quality and look of our assets. As such, new tools were developed and a lot of effort was put into creating those extra small details that add so much to the final look.

DNEG hasn’t had that many creature-heavy shows so far, but that’s definitely an area that’s heavily in development. Given the number of blendshapes that we needed for this show, we had to optimize our creature tools, which laid down groundwork that will benefit us in the future. I can see it helping with the next show I’m on, which features similar creature needs.

How was it working in parallel with so many other vendors on this movie?

We actually developed quite a close relationship with some of the vendors. For example, Territory Studios was tasked with creating all the holograms and graphics for the project. Like us, they came onto the show quite late.

We developed a process with them where they would create elements and then we would populate that throughout our sequences — taking their look and re-creating it on our side (as they were working in different software than us). In some cases they would give us direct files and textures that we could then use on our side, and in some cases we had to create 3D assets to accommodate what was needed. Working on holograms in this way wasn’t something that we had originally planned to do. This show was constantly evolving, more so as we got closer and closer to the deadline — with various hands coming up here and there to make it all work.

Did different sites of DNEG handle different aspects of the show?

Alessandro Ongaro, our VFX supervisor, and Janet Yale, producer on the show, led the project from here in Vancouver. And we had our Montreal, Mumbai, and London teams helping us work around the clock on the project.

Because we needed such a quick turnaround on our hero assets, they needed to be done by our Vancouver build team. They created Vungus, Guy, and Narlene. Basically, we did all the creatures here in Vancouver. And building out the London HQ was handled by our Mumbai team. They also created various digi doubles, including those for H and M. Given that there were a lot of heavy CFX and FX shots on this project, we drove the shots that required a lot of development/tech-heavy work, from Vancouver. We created hero shots here and propagated them out to our other internal sites, so they had a reference point.

Usually you try to make things as painless as possible in that it’s easier for one site to deal with an enclosed sequence. But we had quite a few sequences where multiple sites were working across the same sequence. Making sure everyone felt supported and that we were dealing with things correctly was important. Communication was key to making sure that happened.

What are you most proud of on this project?

I’m really happy with what we were able to pull off both technically and creatively and the amount of work we achieved on the show overall. Given how much there was to support and manage, and the time constraints that we were up against, this was quite a feat. Visually, as you hinted at, the look of the nebula turned out really, really awesome, and everybody really loved how Vungus turned out. We had an amazingly talented group of artists working on the show, in all departments, across many locations, making this all possible.

That’s it for Part 1 of this series. Thanks so much to Frederik for sharing insights on DNEG’s stunning work on this project. I can’t wait to see what you get up to next!

And stay tuned for Part 2, the final installment in this series, where I chat with Craig McPherson, Animation Supervisor at Sony Pictures Imageworks, about bringing Pawny and the Hive creature to life.

MIB – © 2019 CTMG, Inc. All Rights Reserved.

Jessica Fernandes is an adventurer and wordsmith based in Vancouver. She enjoys spreading appreciation for the arts through stories and encounters with inspiring creators.

© 2025 · Spark CG Society